Blockchain technology is resistant to data tampering and forgery, which makes it a natural basis for the secure storage and transmission of data in distributed networks. This study applies blockchain technology to data auditing: it constructs an aggregate signature based on conditional identity anonymization to protect user privacy, simplifies the auditing computation with a homomorphic hash function, and deploys three kinds of smart contracts on the blockchain to form a blockchain-based data integrity auditing scheme. For privacy protection, a blockchain privacy protection model is constructed by integrating a differential privacy mechanism into the blockchain smart contract layer. The experimental results show that the data integrity auditing scheme has low blockchain storage cost and time overhead, with the average time overhead under different dynamic operations below 30ms. The privacy protection model is also efficient, with encryption and decryption times of 0.075s and 0.063s, respectively, for the largest data file, and a significant speed advantage in all phases of operation. The proposed scheme meets the needs of data integrity and privacy protection and can provide efficient services for users.

With the advent of the big data era, the growing volume of data has brought new challenges to secure data storage and sharing. In the past, users were accustomed to storing their personal data on third-party data storage platforms managed and maintained by centralized servers. However, such a platform is only semi-trusted: users cannot control how the platform uses the data stored on it, the data may be misused, and a centralized platform is prone to data loss due to a single point of failure [7,20,27]. The traditional centralized data management model therefore carries a serious risk of privacy leakage and is in urgent need of transformation.

In contrast, a distributed network is a network environment composed of computer nodes distributed across different geographical locations [15]. Data is jointly maintained by multiple independent storage servers in the network, which avoids the single point of failure problem of centralized storage systems, reduces the risk of user data loss, and improves the reliability of the data management system [19,14,5]. In addition, nodes outside the network can be configured to join the distributed network, which gives it strong availability and scalability [3,25]. The peer-to-peer network, represented by the blockchain network, is a typical distributed network widely used in research on user data security protection.

Blockchain is a distributed database technology realized through a variety of technologies such as public key cryptography algorithms, hashing algorithms, consensus mechanisms, and distributed storage technologies [6,18]. The distributed database of blockchain has more security advantages compared to the centralized system. In blockchain system, even if a specific node fails, the data is still guaranteed to be complete and not tampered with [22,13]. The structure without third-party intermediaries also promotes data security and integrity as each transaction in a blockchain is based on a consensus established by the nodes of the entire blockchain network and one no longer needs to assess the trustworthiness of intermediaries or other participants in the network [10,8,1].

Much of the data in today’s society has non-negligible commercial value, and the security of user data should not depend entirely on third-party application platforms, so a large number of scholars have studied privacy data protection schemes. Literature [16] introduces the privacy and security risks of cloud computing and, on that basis, compares numerous privacy protection techniques, including access control and attribute-based encryption, and analyzes the characteristics and scope of application of typical schemes. Literature [21] addresses privacy protection in online social networks (OSN): it constructs a security prediction model based on a neural network, a hybrid recursive genetic algorithm, and radial basis functions, encrypts the preprocessed OSN information with an attribute-based encryption scheme, and further improves the security of the private data using a particle swarm optimization algorithm. Literature [9] uses a homomorphic encryption scheme to achieve secure aggregation of ciphertexts at elected central nodes, which are selected in a device-to-device network environment by a reliability-based central node election mechanism. Literature [26] proposes a privacy-preserving scheme for social networks (PPSSN) based on categorical attribute encryption, which balances privacy and security in data distribution by categorizing users and designing access control for different users and permissions, and which also uses a buddy data caching mechanism to further reduce the decryption cost.

In the field of information security, the decentralization and other characteristics of blockchain technology make it well suited to resolving the crisis of trust in the secure sharing of users’ private data. Literature [23] proposes a blockchain-based edge computing technology that provides security protection and integrity checking of data in the cloud as well as broader secure multi-party computation, and introduces the Paillier cryptosystem to reduce the computational burden on terminals while maintaining operational efficiency. Literature [4] shows that the chained block structure of blockchain provides tamper-resistant data storage and sharing and relies on a trusted consensus mechanism to verify the security of data; however, blockchain still has some privacy issues, and the work discusses anonymity and transaction privacy protection under existing cryptographic defense mechanisms. Literature [11] designs a federated blockchain privacy protection scheme (PDPChain) based on an improved Paillier homomorphic encryption mechanism, which encrypts and stores distributed private cluster data using fine-grained access control from ciphertext-policy attribute-based encryption and is suitable for storing and sharing large amounts of data. Literature [2] designs a blockchain privacy protection model based on the DEPLEST algorithm, which keeps local database storage and computation within the limits of an individual user’s device, protects the user’s sensitive information through the distributed blockchain, and passes non-sensitive information to the main system to manage the size of the blockchain.
Literature [17] proposes a two-stage privacy protection mechanism that exploits the transparency of blockchain technology: it first perturbs workers’ location information with a double-perturbation local differential privacy algorithm, and then uses edge cloud computing to upload the sensing data of edge nodes to the blockchain and feed it back to the requester, achieving both integrity of the sensing data and privacy protection. Literature [12] evaluates the role of a blockchain-based distributed access control system in user privacy protection and proposes combining fog computing with a federated chain, which effectively solves the single-point-of-failure problem of data storage by encrypting the data on the edge nodes while providing dynamic, fine-grained access control for the data. Literature [24] emphasizes that centralized access control mechanisms in the cloud can easily lead to tampering with or leakage of sensitive data, and therefore proposes AuthPrivacyChain, a blockchain access control framework that not only blocks illegal access from hackers and administrators but also protects authorized privacy.

The study is based on blockchain technology to design data integrity auditing method and privacy protection method. On the one hand, an aggregate signature algorithm is constructed by combining user anonymous identity and homomorphic hash function to realize conditional identity privacy protection, and the homomorphic feature is utilized to reduce the burden of auditing computation, and at the same time, three kinds of smart contracts are deployed, which are in charge of recording the metadata and executing the auditing tasks, to build the data integrity auditing method. Several comparison algorithms are also selected to analyze their storage costs and time overheads under different dynamic operations to explore the performance of conditional identity anonymous data auditing methods. On the other hand, the differential privacy mechanism is integrated into the smart contract layer of the blockchain network to realize the process of automatically invoking the chain code to add noise to the data during user uploading. The perturbation of the original data is realized by adding random Laplace noise to the numerical data and adding random response noise conforming to the definition of differential privacy to the binary data in non-numerical data for random flip. The encryption and decryption time analysis of different sizes of data and different complexity of access policies are carried out respectively, and comparative experiments of the algorithms under different stages are conducted to measure the privacy protection performance of the privacy protection method in this paper.

Blockchain technology, as an innovative distributed database technology, has an increasingly obvious application value in secure information storage and transmission. As blockchain has the unique performance of decentralization, non-tampering and high security, it brings a brand-new solution and concept to the traditional information security problem.

First, blockchain technology can effectively mitigate data leakage. By combining a distributed ledger with encryption algorithms, it ensures that stored data is not compromised by a single point of failure or by malicious attacks. All data is strictly encrypted and can be accessed and modified only under specific conditions, which greatly reduces the risk of illegal access or theft and improves information security.

Secondly, blockchain technology can enhance the security of data transmission. Traditional data transmission methods often face the risk of interception and tampering, etc. Blockchain technology ensures the integrity and authenticity of the transmitted data through encryption algorithms and consensus mechanisms. The entire transmission data is recorded in the blockchain and jointly verified and maintained by a number of nodes within the network. This ensures that the data is not subject to malicious modification and forgery, and enhances the reliability and security of information transmission.

In addition, blockchain technology provides long-term preservation and backup. Because blockchain storage is distributed, the data is replicated across a number of nodes, each of which keeps a complete copy. This decentralized storage ensures that the data remains durable and reliable and is not lost even when some nodes fail or are attacked. At the same time, this replication lets the network back up and restore data automatically, reducing the risk of data loss.
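The tamper resistance discussed in this section rests largely on hash chaining: each block stores the hash of its predecessor, so editing any stored record invalidates every later link. The following is a minimal sketch of that idea only. The block layout and the helper names `block_hash`, `build_chain`, and `is_valid` are illustrative assumptions, and consensus, signatures, and replication are deliberately left out.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64  # placeholder predecessor hash for the first block


def block_hash(index, data, prev_hash):
    """Hash the block contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records):
    """Link each record to its predecessor through the stored hash."""
    chain, prev = [], GENESIS_PREV
    for i, data in enumerate(records):
        h = block_hash(i, data, prev)
        chain.append({"index": i, "data": data, "prev": prev, "hash": h})
        prev = h
    return chain


def is_valid(chain):
    """Recompute every hash; an edited block no longer matches its hash."""
    prev = GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        if block_hash(block["index"], block["data"], block["prev"]) != block["hash"]:
            return False
        prev = block["hash"]
    return True


chain = build_chain(["record A", "record B", "record C"])
ok_before = is_valid(chain)

# Simulate an attacker silently editing a stored record.
chain[1]["data"] = "record B (tampered)"
ok_after = is_valid(chain)
```

In a real network every node would run a check of this kind against its own replica, which is why a single modified copy is detected rather than accepted.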

With the widespread use of cloud computing technology and the explosive growth of data volumes, it has become particularly important to effectively safeguard the integrity and privacy of outsourced data. However, most of the existing data auditing methods rely on third-party auditors, a practice that not only increases the risk of data leakage and possible malicious behaviors of auditors, but also fails to meet the rising demand for data protection. To address the above challenges, this chapter proposes a blockchain-based conditional identity anonymization data auditing scheme.

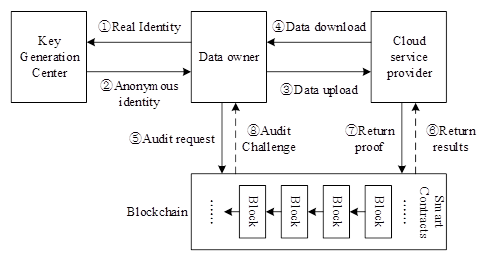

The system model of the blockchain-based data auditing scheme is shown in Figure 1, including four core entities: data owner (DO), key generation center (PKG), blockchain (BC), and cloud service provider (CSP). The specific four core entities are described as follows:

DO: The user first generates the digital signatures and the integrity verification auxiliary information for the outsourced data file. The data file and signatures are then uploaded to the CSP, and the integrity verification auxiliary information is uploaded to the blockchain via a secure channel. Finally, the DO deletes the local copy to save storage space.

PKG: Responsible for generating anonymous identity and corresponding key pairs based on DO’s real identity in the initialization phase.

CSP: responsible for storing the outsourced data and their digital signatures, and handling integrity audit challenges from the BC, returning response messages containing audit proofs to the BC.

BC: The blockchain maintains a shared ledger among the participants of the decentralized network. It automatically records data integrity information and performs periodic cloud data audits via smart contracts.

The blockchain-based conditional identity anonymization data integrity auditing scheme aims to achieve reliable auditing of data integrity and to protect the identity privacy of the data owner from possible misbehavior by malicious third-party auditors. Therefore, the following types of attacks are considered in the data auditing process: forgery attacks, privacy attacks and data recovery attacks.

This scheme consists of five algorithms: \(Setup\), \(Key\; Gen_{PID}\), \(Sig\), \(Challenge\), and \(Verify\).

Setup: This algorithm is executed by the PKG. Given the security parameter \(\xi\), it generates two multiplicative cyclic groups \(G_{1}\) and \(G_{2}\) of prime order \(p\) and sets up a bilinear map \(e:G_{1} \times G_{1} \to G_{2}\). Let \(g\) be a generator of \(G_{1}\). The PKG selects two collision-resistant hash functions, \(H_{1} :G_{1} \times G_{1} \times \left\{0,1\right\}^{*} \to \left\{0,1\right\}^{\rho }\) and \(H_{2} :G_{1} \times \left\{0,1\right\}^{\rho } \to Z_{p}^{*}\), where \(\rho\) denotes the bit length of the anonymous identity, and a homomorphic hash function \(H_{3} :Z_{p} \to G_{1}\). The PKG randomly selects \(x\leftarrow Z_{p}^{*}\) as the master private key, computes the master public key \(mpk=g^{x}\), and randomly selects an element \(\omega \leftarrow G_{1}\). Finally, the PKG keeps \(x\) secret and publishes the system parameters: \[\label{GrindEQ__1_} params=\left(e,G_{1} ,G_{2} ,g,p,\omega ,mpk,H_{1} ,H_{2} ,H_{3} \right). \tag{1}\]
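The text does not give the construction of the homomorphic hash \(H_{3}\), so the following sketch assumes the common exponentiation form \(H(m)=g^{m}\) in a prime-order subgroup, purely to illustrate the property the audit relies on. The parameters `P`, `Q`, `G` and the function `hom_hash` are illustrative assumptions, with `Q` playing the role of the group order \(p\); the numbers are far too small for real use, and no pairing is involved.

```python
# Toy parameters (assumptions, insecure sizes):
# P = 2*Q + 1 is a safe prime and G generates the subgroup of prime order Q.
P = 2039
Q = 1019
G = 4


def hom_hash(m):
    """Map an element of Z_Q into the order-Q subgroup of Z_P^*."""
    return pow(G, m % Q, P)


m1, m2, k = 123, 987, 5

# Additive homomorphism: H(m1 + m2) = H(m1) * H(m2)
lhs_sum = hom_hash(m1 + m2)
rhs_sum = (hom_hash(m1) * hom_hash(m2)) % P

# Scalar homomorphism: H(k * m1) = H(m1)^k
lhs_scalar = hom_hash(k * m1)
rhs_scalar = pow(hom_hash(m1), k, P)
```

Because a hash of a linear combination equals the same combination of hashes, a verifier can check an aggregated response against per-block values without recomputing over every block, which is the sense in which the homomorphic hash simplifies the auditing computation.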

The PKG then computes a signing private key corresponding to the anonymized identity PID using the master private key: \[\label{GrindEQ__5_} SK_{PID} =\left(s+x\right)H_{2} \left(PID\right). \tag{5}\] Finally, the PKG transmits \(\left(PID,SK_{PID} ,Time\right)\) to the DO over a secure channel.

Finally, the DO uploads the data block and digital signature \(\left(\left\{F\left[i\right]\right\}_{1\le i\le n} ,\left\{Sig_{i} \right\}_{1\le i\le n} ,Y\right)\) to the CSP, and uploads the auxiliary audit information \(IVA\) and the anonymized identity \(PID\) to the blockchain’s storage contract for preservation.

After BC receives the integrity audit proof response message \(\left\{\mu ,\eta ,Sig\right\}\), it utilizes \(\lambda\) to determine whether the integrity verification equation is valid or not: \[\label{GrindEQ__16_} \begin{array}{rcl} {e\left(g,Sig\right)} & {=} & {e\left(Y,\lambda \right)\cdot e\left(Y,\lambda \right)} {\cdot e\left(\left(PID_{a} \cdot mpk\right)^{H_{2} \left(PID\right)} ,\mu ^{p-\mu } \cdot \prod _{j=j_{1} }^{j_{m} }H_{2} \left(Fid\left\| PID\right\| i\right)^{v_{j} } \right)}. \end{array} \tag{16}\]

If the verification equation holds, the data outsourced by the DO to the CSP is intact. Otherwise, the outsourced data has been corrupted.
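The challenge and verification steps can be illustrated with a heavily simplified sketch. It is not the pairing-based equation of the scheme: it omits the aggregate signature, the anonymous identity, and all privacy guarantees, and it assumes an exponentiation-based homomorphic hash over a toy group. The names `make_tags`, `challenge`, `prove`, and `verify` are hypothetical and only show how a sampled, aggregated response can be checked against per-block auxiliary values.

```python
import random

P, Q, G = 2039, 1019, 4  # toy safe-prime group (assumption, insecure size)


def hom_hash(m):
    """Assumed homomorphic hash: H(m) = G^m mod P."""
    return pow(G, m % Q, P)


def make_tags(blocks):
    """Owner side: one homomorphic tag per block, kept by the verifier."""
    return [hom_hash(m) for m in blocks]


def challenge(n_blocks, sample_size, rng):
    """Verifier side: pick random block indices and nonzero coefficients."""
    indices = rng.sample(range(n_blocks), sample_size)
    return [(j, rng.randrange(1, Q)) for j in indices]


def prove(blocks, chal):
    """Storage side: aggregate the challenged blocks into a single value."""
    return sum(v * blocks[j] for j, v in chal) % Q


def verify(tags, chal, mu):
    """Verifier side: compare H(mu) with the product of tag^coefficient."""
    expected = 1
    for j, v in chal:
        expected = (expected * pow(tags[j], v, P)) % P
    return hom_hash(mu) == expected


rng = random.Random(7)
blocks = [rng.randrange(Q) for _ in range(16)]
tags = make_tags(blocks)
chal = challenge(len(blocks), 4, rng)

honest_ok = verify(tags, chal, prove(blocks, chal))

# Corrupt one of the challenged blocks and run the same audit again.
corrupted = list(blocks)
bad_index = chal[0][0]
corrupted[bad_index] = (corrupted[bad_index] + 1) % Q
corrupt_ok = verify(tags, chal, prove(corrupted, chal))
```

The honest response passes and the corrupted one fails. In this toy version a change is detected only if the affected block is among those sampled, which is why audits of this style are repeated periodically.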

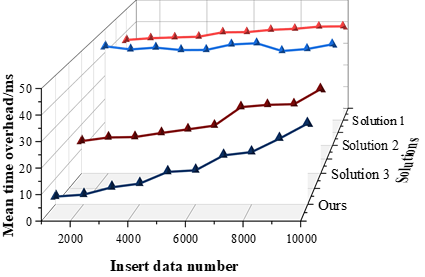

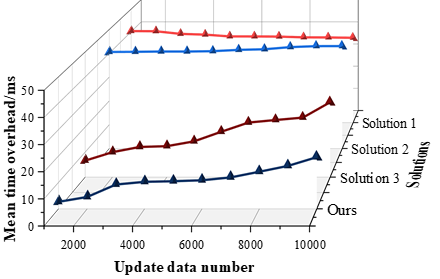

In this section, the performance of the proposed data integrity auditing scheme is evaluated by comparison with three other schemes. Scheme 1 is a multi-copy data integrity verification scheme based on spatio-temporal chaos, Scheme 2 is a multi-copy data integrity verification scheme based on identity signature, and Scheme 3 is a blockchain cloud storage integrity auditing scheme based on T-Merkle hash tree. The schemes are compared in terms of blockchain storage cost and the time overhead required under different dynamic update operations.

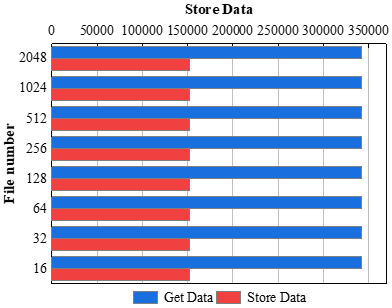

In Ethereum, each transaction consumes a certain amount of gas. A series of tests was conducted, and the gas consumption of the smart contracts for different numbers of files (from 16 to 2048) is shown in Figure 2. The results show that the gas consumed by smart contract storage and retrieval stays around 150,000 and 340,000, respectively, and is independent of the number of files stored. Therefore, the blockchain storage overhead of the proposed blockchain-based conditional identity anonymization data auditing scheme is very small.

The experimental results show that the required time overhead tends to grow as more data is inserted. The average time overhead of this chapter’s scheme is smaller overall than that of the other three schemes for every number of inserted data items, ranging from about 3ms to 30ms. The average time overhead of scheme 3 increases with the amount of inserted data, from 13ms to 35ms, while that of schemes 1 and 2 varies little and stabilizes at about 38ms and 40ms, respectively.

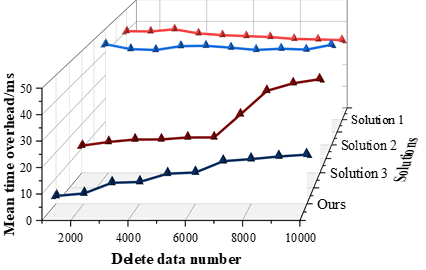

In the deletion experiments, the average time overhead of the three comparison schemes for deleting different amounts of data is compared with that of the scheme in this chapter. The time overhead of the data deletion operation is shown in Figure 4, where the horizontal axis indicates the number of deleted data items and the vertical axis indicates the corresponding time overhead.

From the experimental results, it can be seen that the average time overhead of this chapter’s scheme at different numbers of deleted data has a significant advantage over the other schemes, and the average time overhead spent in scheme 3 increases with the increase of the amount of deleted data. The average overhead time of scheme 1 to scheme 3 and the blockchain based data integrity auditing scheme in this paper in the experiment are 38.18ms, 41.93ms, 21.26ms and 11.86ms respectively.

Subsequently, the experiments compare the average time overhead of the three comparison schemes with that of the scheme in this chapter when updating different amounts of data. The time overhead of the data update operation is shown in Figure 5, where the horizontal axis indicates the number of updated data items and the vertical axis indicates the corresponding time overhead. The average overhead time of the blockchain-based data integrity auditing scheme in this paper is 2.5-20ms, and that of schemes 1 to 3 for different numbers of updated data items is 37-40ms, 40-43ms, and 6.5-30ms, respectively. As in the experiments above, the scheme of this chapter spends the least time on updates. Its average time overhead gradually increases with the amount of data updated, but it remains lower overall than that of the other schemes.

Blockchain technology is used to build auditable and tamper-proof data storage solutions, especially for datasets with stringent security requirements. However, there are still challenges in protecting personal privacy information in databases, as any node can openly access personal privacy data stored on the blockchain due to the open and transparent nature of the blockchain. Therefore, there is a need to design a blockchain data sharing network architecture that satisfies the privacy and security requirements of multiple parties.

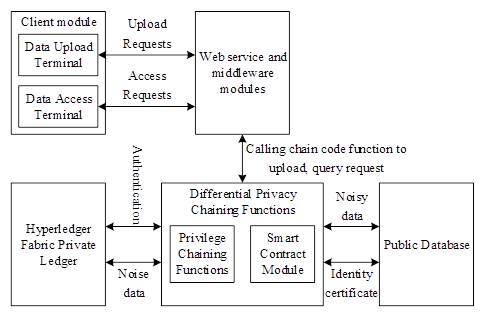

Aiming at the data privacy protection needs in blockchain, this paper designs a blockchain privacy protection model based on differential privacy, and the blockchain privacy protection model is shown in Figure 6. Users can carry out user operations through the data upload terminal and data access terminal of the client module, and make upload and access requests through the Web service with the middleware module. The smart contract module, on the other hand, automatically processes the target user’s request according to the differential privacy chain code function and permission control chain code function installed in the blockchain network. The public data and identity certificates are stored in the public database of the blockchain, which can provide services to all nodes in the network, while the noise data is stored in the private ledger of the Hyperledger Fabric, and only the privileged nodes can use the private data through the authentication by the privilege control function.

The differential privacy algorithm for data sharing works as follows. When an individual or organization uploads information to the blockchain network for sharing, identifying attributes such as name and identification number are removed for anonymization, and the differential privacy mechanism in the smart contract adds noise to perturb the uploaded personal data and prevent differential attacks.

The Laplace noise function adds to numerical data a random number drawn from the Laplace distribution with location \(\mu\) and scale \(\lambda\). A random variable \(\alpha \sim UNI\left(0,1\right)\) is drawn from the uniform distribution and substituted into the inverse of the Laplace cumulative distribution function, which gives the noise value: \[\label{GrindEQ__17_} F^{-1} \left(\alpha \right)=\left\{\begin{array}{ll} {\mu +\lambda \ln \left(2\alpha \right)} & {\alpha <\frac{1}{2} }, \\ {\mu -\lambda \ln \left(2\left(1-\alpha \right)\right)} & {\alpha \ge \frac{1}{2} }. \end{array}\right. \tag{17}\]

If instead \(\alpha \sim UNI\left(-0.5,0.5\right)\) is drawn, the piecewise function can be written as a single expression, where the \(sign\) function returns the sign of its argument and the \(abs\) function returns its absolute value. The noise value is then: \[\label{GrindEQ__18_} F^{-1} \left(\alpha \right)=\mu -\lambda *sign\left(\alpha \right)*\ln \left(1-2*abs\left(\alpha \right)\right) . \tag{18}\] The computed random Laplace noise is added to the query result \(f\left(D\right)\), with the scale set to the sensitivity \(\Delta f\) divided by the privacy budget \(\varepsilon\): \[\label{GrindEQ__19_} M\left(D\right)=f\left(D\right)+Lap\left(0,\frac{\Delta f}{\varepsilon } \right) . \tag{19}\]

The size of the added noise is inversely proportional to the privacy budget \(\varepsilon\). The smaller the privacy budget, the larger the added noise, the stronger the privacy protection, and the lower the data usability; conversely, the larger the privacy budget, the smaller the added noise, the weaker the protection, and the higher the usability. Choosing a privacy budget that matches the privacy requirements of a specific dataset balances the level of privacy protection against data availability.
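The sampling formula (18) and the mechanism (19) can be sketched in a few lines. This is an illustration under stated assumptions rather than the chain code used in the experiments: the function names, the sensitivity of 1, and the two example budgets are all hypothetical choices made for the demonstration.

```python
import math
import random


def laplace_noise(scale, rng, mu=0.0):
    """Draw one Laplace(mu, scale) sample by inverting the CDF, as in Eq. (18)."""
    alpha = rng.random() - 0.5           # uniform on [-0.5, 0.5)
    while alpha == -0.5:                 # avoid log(0) at the boundary
        alpha = rng.random() - 0.5
    sign = 1.0 if alpha >= 0 else -1.0
    return mu - scale * sign * math.log(1.0 - 2.0 * abs(alpha))


def laplace_mechanism(value, sensitivity, epsilon, rng):
    """Return value + Lap(0, sensitivity / epsilon), as in Eq. (19)."""
    return value + laplace_noise(sensitivity / epsilon, rng)


def mean_abs_noise(epsilon, rng, sensitivity=1.0, trials=20000):
    """Empirical average noise magnitude for a given privacy budget."""
    scale = sensitivity / epsilon
    return sum(abs(laplace_noise(scale, rng)) for _ in range(trials)) / trials


rng = random.Random(0)
noisy = laplace_mechanism(42.0, sensitivity=1.0, epsilon=0.5, rng=rng)

strong_privacy = mean_abs_noise(0.1, rng)  # small budget, large noise
weak_privacy = mean_abs_noise(2.0, rng)    # large budget, small noise
```

The expected magnitude of Laplace noise equals its scale \(\Delta f/\varepsilon\), so the two averages should land near 10 and 0.5, which makes the privacy and usability trade-off concrete.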

Suppose the randomized answers of a sample of \(n\) respondents are collected and the number of records with attribute “s” is to be estimated. Let \(\pi\) be the true proportion of respondents holding the attribute, and let each respondent report the true answer with probability \(p\) and the flipped answer with probability \(1-p\). Assume that the number of people who answered “yes” is \(n_{1}\) and the number of people who answered “no” is \(n_{2}\), with \(n=n_{1}+n_{2}\). Then: \[\label{GrindEQ__20_} \begin{cases} {P_{r} \left[x_{i} ="yes"\right]=\pi *p+\left(1-\pi \right)*\left(1-p\right)} \\ {P_{r} \left[x_{i} ="no"\right]=\left(1-\pi \right)*p+\pi *\left(1-p\right)} \end{cases} \tag{20}\] An unbiased estimate is obtained with the method of maximum likelihood, using the likelihood function: \[\label{GrindEQ__21_} L=\left(\pi *p+\left(1-\pi \right)*\left(1-p\right)\right)^{n_{1} } *\left(\left(1-\pi \right)*p+\pi *\left(1-p\right)\right)^{n_{2} } . \tag{21}\] This gives the unbiased estimate \(\tilde{\pi }\) of \(\pi\): \[\label{GrindEQ__22_} \tilde{\pi }=\frac{p-1}{2p-1} +\frac{n_{1} }{(2p-1)n} . \tag{22}\] The estimated number of records with attribute “s” is: \[\label{GrindEQ__23_} \tilde{n}=n*\tilde{\pi }=\frac{p-1}{2p-1} n+\frac{n_{1} }{2p-1} . \tag{23}\]

Noise addition using random flipping can effectively protect the original data from theft, and the overall features of the original dataset can still be obtained by unbiased estimation of the data processing.
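The random flipping and the estimators (22) and (23) can be checked with a small simulation. This is a sketch under assumed values: the sample size, the true proportion of 0.30, the truthful-answer probability of 0.75, and the helper names are all hypothetical and chosen only to make the recovery of the aggregate visible.

```python
import random


def randomize(truth, p, rng):
    """Report the true bit with probability p, the flipped bit otherwise."""
    return truth if rng.random() < p else (not truth)


def estimate_proportion(n_yes, n, p):
    """Unbiased estimate of the true 'yes' proportion, as in Eq. (22)."""
    return (p - 1) / (2 * p - 1) + n_yes / ((2 * p - 1) * n)


rng = random.Random(1)
n, true_pi, p = 50000, 0.30, 0.75

# Each individual's true binary attribute, then its randomized report.
truths = [rng.random() < true_pi for _ in range(n)]
reports = [randomize(t, p, rng) for t in truths]

n_yes = sum(reports)                 # n_1 in the text
pi_hat = estimate_proportion(n_yes, n, p)
count_hat = n * pi_hat               # Eq. (23)
actual_pi = sum(truths) / n
```

Any single report is deniable because it may have been flipped, yet `pi_hat` stays close to `actual_pi` for a large sample. The estimator is undefined at \(p=0.5\), where the reports carry no information about the true values.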

Users can verify their data by sending verification requests to the endorsing nodes, which prevents uploaded private data from being tampered with by attackers; the consensus property of the blockchain itself also requires such data verification. In algorithm (18), the endorsing node sends its identity information and the identifier of the data to be verified to the blockchain network through the client. The smart contract then retrieves the noisy data for that identifier from the private database according to the user’s authority, denoises it, and returns it to the verifier, which completes the security verification of the data and ensures data consistency across the blockchain network.

Proof: Let \(D\) and \(D{'}\) be two neighboring datasets, let \(f\left(\cdot \right)\) be the feature correlation function on the neighboring datasets, and let \(M\) denote the correlation feature computed for any one record. The global sensitivity is: \[\label{GrindEQ__24_} \Delta f_{M} ={\mathop{\max }\limits_{D,D'}} \left\| f_{M} \left(D\right)-f_{M} \left(D'\right)\right\| _{1}. \tag{24}\] According to the definition of the Laplace distribution with scale \(b=\Delta f_{M} /\varepsilon\), the probability density function of the DPNA algorithm is: \[\label{GrindEQ__25_} P_{r} \left[D\right]=\frac{1}{2b} \exp \left(-\frac{\left|f_{M} \left(D\right)-M\right|}{b} \right)=\frac{\varepsilon }{2\Delta f_{M} } \exp \left(-\frac{\varepsilon \left|f_{M} \left(D\right)-M\right|}{\Delta f_{M} } \right). \tag{25}\]

Thus the ratio of the probability density functions of the results computed by the DPNA algorithm on two neighboring datasets is as follows: \[\begin{aligned} \label{GrindEQ__26_} \frac{P_M(D)}{P_M(D')} &= \prod_{j=1}^{d} \exp \left( -\frac{\varepsilon \left|f_M(D)_j - M_j\right|}{\Delta f_M} \right) \bigg/ \exp \left( -\frac{\varepsilon \left|f_M(D')_j - M_j\right|}{\Delta f_M} \right) \\ &= \prod_{j=1}^{d} \exp \left( \frac{\varepsilon \left( \left|f_M(D')_j - M_j\right| - \left|f_M(D)_j - M_j\right| \right)}{\Delta f_M} \right) \\ &\leq \prod_{j=1}^{d} \exp \left( \frac{\varepsilon \left|f_M(D)_j - f_M(D')_j\right|}{\Delta f_M} \right) \\ &= \exp \left( \frac{\varepsilon \left\|f_M(D) - f_M(D')\right\|_1}{\Delta f_M} \right) \\ &\leq \exp(\varepsilon) \end{aligned} \tag{26}\] Here the first inequality follows from the triangle inequality and the last from the definition of \(\Delta f_{M}\) in (24).

Therefore, the above satisfies the definition of differential privacy, i.e., the DPNA algorithm satisfies \(\varepsilon -\)differential privacy when the given privacy budget is \(\varepsilon\). Thus, the proof is complete.
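The bound in (26) can be sanity-checked numerically for the scalar case \(d=1\). The sketch below is not part of the proof: the query answers 10 and 11, the sensitivity of 1, the budget of 0.8, and the output grid are hypothetical values chosen so that the two datasets differ by exactly the sensitivity.

```python
import math


def laplace_pdf(x, loc, scale):
    """Density of the Laplace distribution with the given location and scale."""
    return math.exp(-abs(x - loc) / scale) / (2.0 * scale)


def max_density_ratio(f_d, f_d_prime, sensitivity, epsilon, outputs):
    """Largest ratio of output densities over a set of candidate outputs."""
    scale = sensitivity / epsilon
    return max(
        laplace_pdf(m, f_d, scale) / laplace_pdf(m, f_d_prime, scale)
        for m in outputs
    )


epsilon = 0.8
sensitivity = 1.0
f_d, f_d_prime = 10.0, 11.0  # neighboring answers, |difference| <= sensitivity
outputs = [x / 10.0 for x in range(-200, 401)]  # grid of possible outputs M

worst_ratio = max_density_ratio(f_d, f_d_prime, sensitivity, epsilon, outputs)
bound = math.exp(epsilon)
```

On this grid the worst-case ratio reaches \(\exp(\varepsilon)\) but does not exceed it, and the bound is attained for outputs at or below the smaller of the two answers, which matches the final inequality of the derivation.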

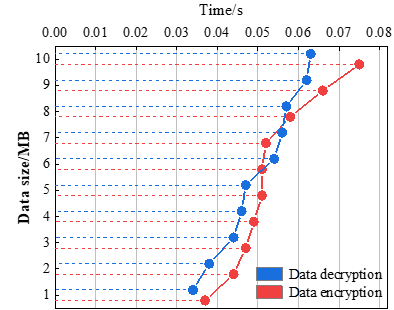

Experiment 1. The experiment sets up data files of different sizes, uses the differential privacy algorithm to perform encryption and decryption operations on the data, and takes the final computation time as the basis for studying the relationship between data size and data encryption and decryption cost.

The encryption and decryption times for data files of different sizes are shown in Figure 7. As the data size increases, both the encryption time and the decryption time grow linearly. In an actual Hyperledger transaction, a single block can hold at most 10MB of data, so the largest data file is 10MB; at this size the encryption time is about 0.075s and the decryption time is about 0.063s. Both operations complete within a time acceptable to users, so the scheme is feasible.

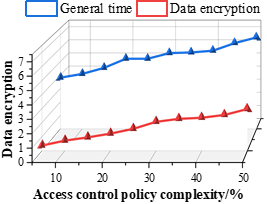

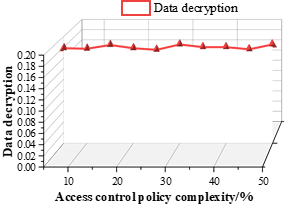

Experiment 2. Access control policies of different complexity are configured, and the running time of the differential privacy algorithm and the overall running time of the scheme are measured for each, in order to investigate the effect of access control policy complexity on running time.

The computation time under access control policies of different complexity is shown in Figure 8, where Figure 8a shows the data encryption time and Figure 8b the data decryption time. As the complexity of the access policy increases, the time consumed by encryption increases, and so does the overall running time of the scheme; in the experiments, the data encryption time and the overall running time stay below 3s and 7s, respectively. The time overhead of the blockchain privacy protection scheme based on differential privacy is therefore small across the whole chain phase: even when the complexity of the access control policy is as high as 50%, the computation time is only 2.97s, which is within the acceptable range. Moreover, Figure 8b shows that the decryption time is essentially independent of the policy complexity: it varies only slightly as the access policy complexity increases and stabilizes around 0.18s, which keeps the overall time consumption low.

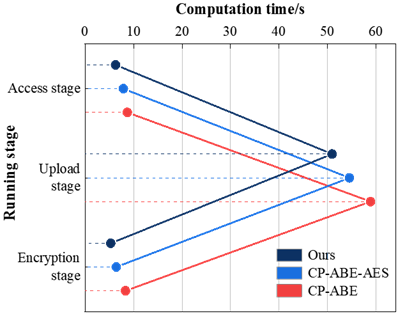

Experiment 3. To compare the overall time consumption of the schemes stage by stage under realistic conditions, files of 600 MB are uploaded and downloaded using the CP-ABE algorithm, the CP-ABE-AES algorithm, and the differential privacy algorithm of this paper. The overall time consumption of the three algorithms is measured, and their performance is compared according to their running times.

The time consumed by the algorithms in the different stages is shown in Figure 9. The computation time of this paper’s differential privacy algorithm is smaller than that of the comparison algorithms in every stage. First, the data encryption and decryption times of all three algorithms stay within 10s, and in both the data encryption phase and the data access phase the time overhead of the differential privacy algorithm used in this scheme is lower than that of the comparison algorithms. The differential privacy algorithm is therefore considered to perform better and to provide more efficient services to users. Second, the most time-consuming part of the whole scheme is data transmission, which takes roughly 50\(\mathrm{\sim}\)60s for all three schemes. Because the 600MB file used here must be transmitted in several parts, the transmission is affected by bandwidth, network latency, and similar factors, but the experimental data show no degradation in performance. In conclusion, the blockchain privacy protection scheme based on differential privacy has a significant speed advantage at every stage when uploading large data files.

In the current digital era, data security and privacy protection have become core issues of global concern. Against this background, the study proposes a data integrity auditing scheme with conditional identity anonymization based on blockchain technology. A blockchain privacy protection model based on differential privacy is also proposed for the privacy protection of shared data in blockchain networks. The two methods are evaluated through experiments, and the main results are as follows:

(1) The blockchain storage overhead of the data integrity auditing scheme in this paper is small, and its time overhead under different dynamic operations is lower than that of the other methods. When inserting, deleting, and updating data, the average time overhead of this paper’s scheme is 3\(\mathrm{\sim}\)30ms, 3\(\mathrm{\sim}\)20ms and 2.5\(\mathrm{\sim}\)20ms, respectively. This confirms that the scheme outperforms the other schemes in terms of blockchain storage cost and computation overhead.

(2) The encryption and decryption durations of the differential privacy-based blockchain privacy protection method are lower than 0.075s and 0.063s for different sizes of data files, the encryption durations and the total runtime in different complexity access policies are lower than 3s and 7s, respectively, and the processing times in different runtime phases are smaller than those of the comparison methods. Therefore, it can be considered that the blockchain privacy protection method based on differential privacy has better performance and can provide more efficient and convenient services.

Blockchain technology has great potential for data integrity and privacy protection, but the challenges and problems it faces also deserve attention. With the continued development and improvement of blockchain technology, it is expected to find wider application and enable further breakthroughs in the field of network security.

Yihui Deng was born in Zhanjiang, Guangdong, P.R. China, in 1990. He received the bachelor’s degree from Jiangxi University of Technology, P.R. China. He now works at the Experimental Training Center, Guangzhou College of Applied Science and Technology. His main research interests are computer networks and information security.

Sanxiang Xiao was born in October 1986. He received the Master’s degree from Central South University of Forestry and Technology, P.R. China. He now works at the School of Computer Science, Guangzhou College of Applied Science and Technology. His main research interests are computer networks and artificial intelligence.

The authors declare that they have no conflicts of interest.